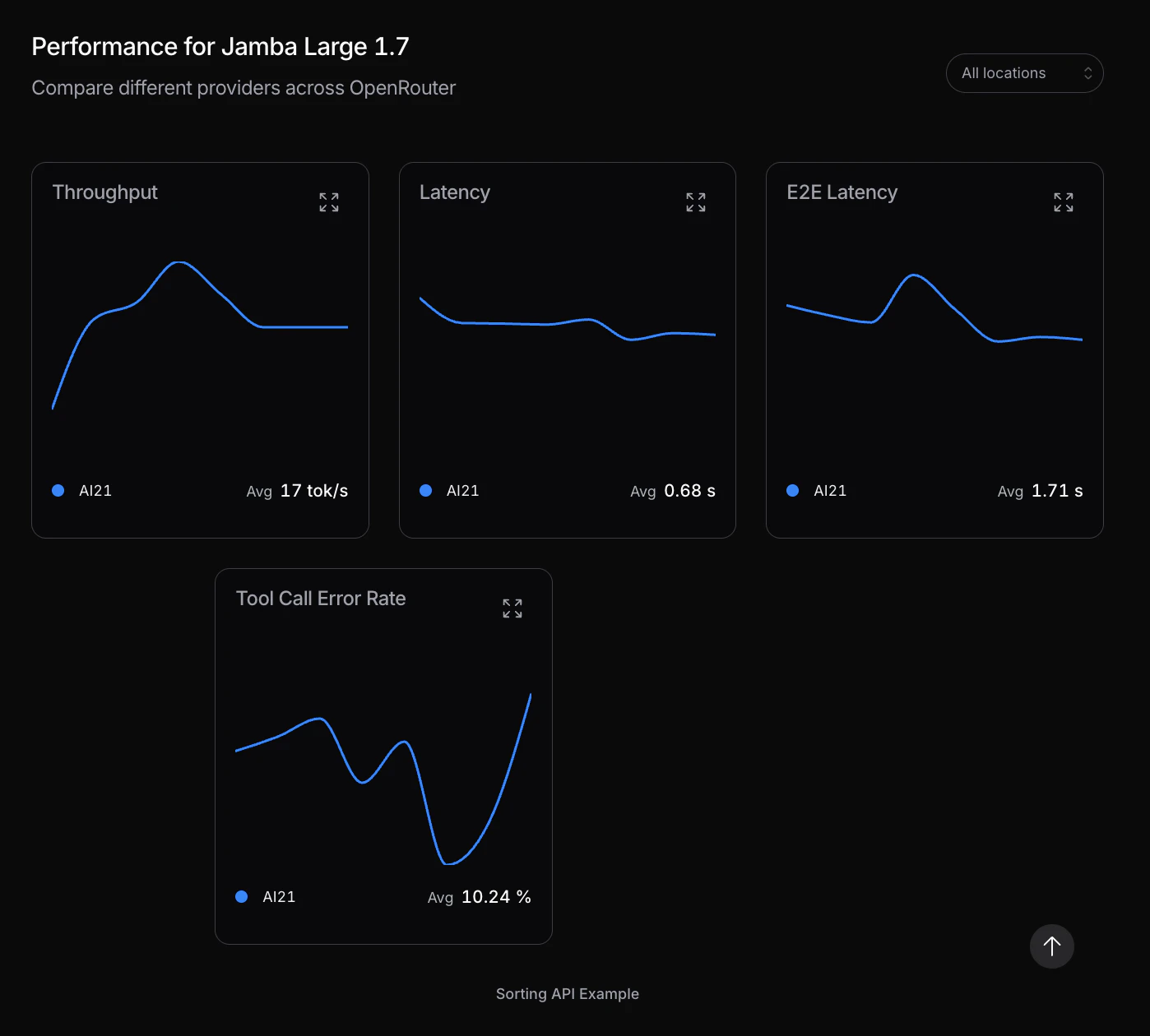

Jamba by AI21 Labs


The open-source LLM that runs 800 pages of context at 2.5x speed

Artificial Intelligence

Open Source

Software Engineering

Most LLMs choke on long context. Jamba doesn't. Built on a hybrid Mamba-Transformer architecture, it handles 256K tokens at 2.5x the speed, fits on a single 80GB GPU, is fully open-source, and is actually validated on benchmarks.
